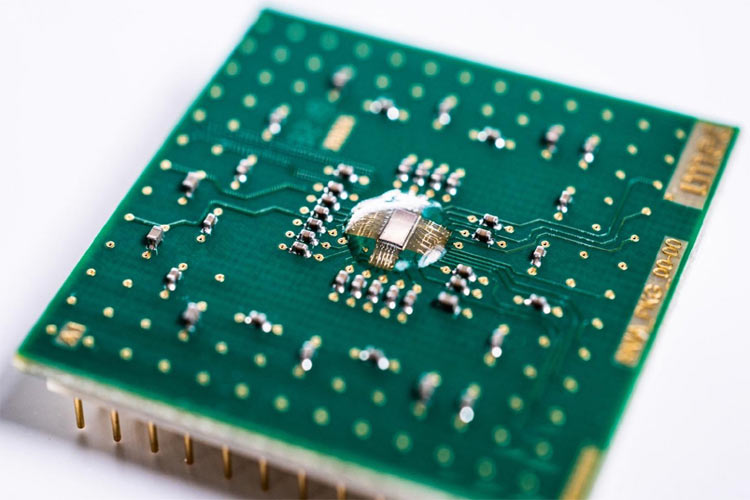

To bring deep neural network calculations to IoT edge devices, Imec and GlobalFoundries (GF) have jointly developed a new artificial intelligence chip based on Imec’s Analog in Memory Computing (AiMC) architecture and built on GF’s 22FDX platform. The new chip is optimized to perform deep neural network calculations on in-memory computing hardware in the analog domain.

The chip is a key enabler for inference on the edge in low-power devices, reaching a record-high energy efficiency of up to 2,900 TOPS/W. The privacy, security, and latency benefits of this new technology are expected to have an impact on AI applications in a wide range of edge devices, from smart speakers to self-driving vehicles.
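To put the 2,900 TOPS/W figure in perspective, the following minimal sketch converts it into energy per operation. Only the peak efficiency comes from the article; the workload of one billion operations per second is a hypothetical value chosen for illustration, and the function names are not part of any Imec or GF tooling.

```python
# Peak efficiency quoted in the article, in tera-operations per second per watt.
PEAK_TOPS_PER_WATT = 2900


def energy_per_op_joules(tops_per_watt):
    """Convert TOPS/W to joules per operation.

    One watt is one joule per second, so TOPS/W equals tera-operations per joule.
    """
    ops_per_joule = tops_per_watt * 1e12
    return 1.0 / ops_per_joule


def power_watts(ops_per_second, tops_per_watt):
    """Power needed to sustain a given operation rate at a given efficiency."""
    return ops_per_second * energy_per_op_joules(tops_per_watt)


energy_fj = energy_per_op_joules(PEAK_TOPS_PER_WATT) * 1e15  # femtojoules

# Hypothetical workload (assumption, not from the article): 1e9 operations per second.
workload_ops_per_second = 1e9
power_uw = power_watts(workload_ops_per_second, PEAK_TOPS_PER_WATT) * 1e6  # microwatts

print(f"Energy per operation at peak efficiency: {energy_fj:.3f} fJ")
print(f"Power for 1e9 ops/s at peak efficiency: {power_uw:.3f} uW")
```

At peak efficiency this works out to roughly a third of a femtojoule per operation. Real workloads would likely run below the peak figure, so the numbers should be read as a lower bound on energy.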

The new architecture eliminates the von Neumann bottleneck by performing analog computation in SRAM cells. The resulting Analog Inference Accelerator (AnIA), built on GF’s 22FDX semiconductor platform, has exceptional energy efficiency. With the new chip, pattern recognition in tiny sensors and low-power edge devices, which is normally powered by machine learning in data centers, can be performed locally on this power-efficient accelerator. The reference implementation shows not only that analog in-memory calculations are possible in practice, but also that they achieve an energy efficiency ten to a hundred times better than digital accelerators.

GF plans to offer AiMC as a feature on the 22FDX platform, providing a differentiated solution in the AI market. GF’s 22FDX uses 22 nm FD-SOI technology to deliver outstanding performance at extremely low power, with the ability to operate at an ultralow supply voltage of 0.5 V and an ultralow standby leakage of 1 pA per micron.